Preamble: Infrastructure Framework and Migration to RHEL (Proxmox VM)

This document describes the architecture needed to reproduce the stability of a reference instance (Instance A) in a virtual machine environment (the target instance, Instance B). For UNIT3D to work correctly, the code alone is not enough; it needs a robust supporting infrastructure at every layer.

1. Layered Architecture (The Full Stack)

To avoid errors such as “Redis connection refused”, “Not Found” on announces, or “No meta found”, the infrastructure must be aligned from the hardware up to the application.

Layer 1: Host and Virtualization (Proxmox / ESXi / KVM)

- Resources: UNIT3D is intensive in database I/O and memory (Redis). The VM should have CPUs with virtualization support enabled and, preferably, SSD/NVMe storage.

- Network (Bridge Mode): The VM should run on a network bridge that gives it its own IP on the LAN. Avoid double NAT inside the hypervisor so that handling real IPs stays simple.

Layer 2: Operating System (RHEL / AlmaLinux / Rocky)

- File limits: The tracker handles thousands of simultaneous connections. It is vital to raise the file descriptor limit (`ulimit -n 65535`).

- SELinux/Firewall: If SELinux is in `Enforcing` mode, it must be configured to allow PHP-FPM to connect to network ports (Redis, MySQL, Meilisearch): `setsebool -P httpd_can_network_connect 1`

Layer 3: Supporting Services (Redis & Meilisearch)

In container environments these services are isolated. On a dedicated VM:

- Redis: Must be configured to accept local connections and have persistence enabled.

- Meilisearch: This is the search engine. If this service fails, the “Dupe Check” will throw an Error 500.

Layer 4: The Laravel Engine (PHP-FPM)

- Workers and Scheduler: On a VM there are no dedicated containers for the Scheduler and the Worker. systemd services must be created to ensure that `php artisan schedule:work` and `php artisan queue:work` are always running and restart after a failure.
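The two systemd services described above could look roughly like the units written by the sketch below. It is only an illustration: the unit names (`unit3d-scheduler.service`, `unit3d-queue.service`), the `nginx` user, the `/var/www/html` path, and the `/usr/bin/php` binary are assumptions, not values taken from this document.

```shell
# Sketch: write two systemd units that keep the Laravel scheduler and the
# queue worker running. Unit names, user, and paths are assumptions.
write_unit3d_units() {
    local out_dir="$1"                   # e.g. /etc/systemd/system
    local app_dir="${2:-/var/www/html}"  # assumed UNIT3D install path
    local run_as="${3:-nginx}"           # assumed PHP user on RHEL-like systems
    local name cmd

    for name in scheduler queue; do
        if [ "$name" = scheduler ]; then
            cmd="schedule:work"
        else
            cmd="queue:work"
        fi
        # Unquoted heredoc delimiter so the variables above are expanded.
        cat > "${out_dir}/unit3d-${name}.service" <<EOF
[Unit]
Description=UNIT3D ${name} (php artisan ${cmd})
After=network.target

[Service]
User=${run_as}
WorkingDirectory=${app_dir}
ExecStart=/usr/bin/php artisan ${cmd}
Restart=always
RestartSec=5

[Install]
WantedBy=multi-user.target
EOF
    done
}
```

After calling `write_unit3d_units /etc/systemd/system` as root, the units would typically be activated with `systemctl daemon-reload` followed by `systemctl enable --now unit3d-scheduler unit3d-queue`. `Restart=always` is what provides the restart-after-failure behaviour.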

2. Troubleshooting Guide (Checks at Every Layer)

If something fails, use this cascade of commands to find the culprit:

Level 1: External Connectivity (Network)

From outside the VM:

- `curl -I http://tu-dominio.com/announce/test` (must return something other than a 404 or a connection error).

- `ping <IP_VM>` (verifies that the VM responds).

Level 2: System Services

Inside the VM:

- `systemctl status redis` (checks whether Redis is alive).

- `systemctl status meilisearch` (checks the search engine).

- `netstat -tulpn | grep -E '6379|7700|3306'` (make sure the ports are listening).

Level 3: Redis Stability (Critical Point for Network Failures)

If you suspect that Redis is going down:

- `redis-cli monitor` (shows in real time what the application is requesting).

- `redis-cli info memory` (checks whether Redis is running out of RAM).

Level 4: Laravel and Workers

- `php artisan queue:summary` (shows whether jobs are stuck in the queue).

- `tail -f storage/logs/laravel.log` (the first place to look when there is an Error 500).

- `php artisan about` (verifies that Laravel detects Redis and the DB correctly).
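The port check from Level 2 can be turned into a small pass/fail report. The sketch below is an assumption-laden illustration: the function name `check_listeners` is made up, and it reads listener output (from `ss -tln` or `netstat -tln`) on stdin so it can be tried without touching a live system.

```shell
# Sketch: report which of the expected service ports have a TCP listener.
# Reads `ss -tln` or `netstat -tln` output on stdin. The mapping mirrors the
# ports named above: 6379 Redis, 7700 Meilisearch, 3306 MySQL.
check_listeners() {
    local input entry name port status=0
    input="$(cat)"
    for entry in redis:6379 meilisearch:7700 mysql:3306; do
        name="${entry%%:*}"
        port="${entry##*:}"
        # Match ":<port>" followed by whitespace, i.e. the local address column.
        if printf '%s\n' "$input" | grep -Eq ":${port}[[:space:]]"; then
            echo "OK   ${name} listening on ${port}"
        else
            echo "FAIL ${name} not listening on ${port}"
            status=1
        fi
    done
    return "$status"
}
```

A typical call would be `ss -tln | check_listeners`; the function returns a non-zero status if any of the three ports has no listener, which makes it usable in scripts.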

3. Conditions for Stability

To avoid data loss or disconnection problems, the VM must meet the following:

- Redis persistence: Set `appendonly yes` in `redis.conf`.

- Process management: Do not run the workers manually. Use Supervisor or systemd.

- Trust Proxies: The `.env` MUST have `TRUST_PROXIES=*` if there is a reverse proxy in front.

- Folder permissions: `storage` and `bootstrap/cache` must be writable by the user that runs PHP.
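The folder-permission condition is easy to verify with a short script. The following is a minimal sketch; the function name `check_writable_dirs` is hypothetical, and the result is only meaningful when it runs as the same user that runs PHP.

```shell
# Sketch: verify that the directories Laravel writes to exist and are
# writable by the user running this function.
check_writable_dirs() {
    local app_dir="$1" dir status=0
    for dir in storage bootstrap/cache; do
        if [ -d "${app_dir}/${dir}" ] && [ -w "${app_dir}/${dir}" ]; then
            echo "OK   ${dir}"
        else
            echo "FAIL ${dir} is missing or not writable"
            status=1
        fi
    done
    return "$status"
}
```

Call it with the application root, for example `check_writable_dirs /var/www/html` (the path is an assumption), from a shell running as the PHP user.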

This preamble serves as a roadmap for migrating and stabilizing the target instance.

Wiki

This wiki serves as the central resource for setting up, managing, and optimizing your UNIT3D installation. Whether you’re working on local development, managing a production server, or contributing to the open-source community, you’ll find a number of useful guides here.

Backups

UNIT3D offers built-in backup tools, available through the web dashboard or via Artisan commands, allowing you to create, manage, and restore your application snapshots.

1. Configuration

Customize config/backup.php in your editor and adjust settings as needed; inline notes outline the available configuration parameters.

Key structure:

- `backup`
  - `name`
  - `source`
    - `files`: Specifies which directories and files to `include` and which to `exclude` in the backup.

          'include' => [
              base_path(),
          ],
          'exclude' => [
              base_path(),
              base_path('vendor'),
              base_path('node_modules'),
              base_path('storage'),
              base_path('public/vendor/joypixels'),
          ],

      - `follow_links`
      - `ignore_unreadable_directories`
      - `relative_path`
    - `databases`: Specifies the database connections to back up.
  - `database_dump_compressor`: Compressor class (e.g. `Spatie\DbDumper\Compressors\GzipCompressor::class`) or `null` to disable.
  - `destination`: Defines the storage location for backup files.
  - `temporary_directory`: Staging directory for temporary files.
- `notifications`: Define when and how backup events trigger alerts via mail, Slack, or custom channels.
- `monitor_backups`: Detect backup issues; triggers `UnhealthyBackupWasFound` when needed.
- `cleanup`: Define how long to keep backups and when to purge old archives.
  - `strategy`
  - `default_strategy`: Keeps all backups for 7 days; then retains daily backups for 16 days, weekly for 8 weeks, monthly for 4 months, and yearly for 2 years. Deletes old backups exceeding 5000 MB.

        'keep_all_backups_for_days' => 7,
        'keep_daily_backups_for_days' => 16,
        'keep_weekly_backups_for_weeks' => 8,
        'keep_monthly_backups_for_months' => 4,
        'keep_yearly_backups_for_years' => 2,
        'delete_oldest_backups_when_using_more_megabytes_than' => 5000,

- `security`: Ensure that only someone with your `APP_KEY` can decrypt and restore snapshots.

2. Create a backup

You can access the built-in Backups dashboard from the Staff menu. It shows each backup’s status, health, size, and count, and lets administrators launch unscheduled full, database, or files-only backups instantly. Another approach is to use the command line.

Important

Backups initiated via the Staff Dashboard buttons may time out on very large installations.

The following artisan commands are available:

- `php artisan backup:run`: Creates a timestamped ZIP of application files and database.

- `php artisan backup:run --only-db`: Creates a timestamped ZIP containing only the database.

- `php artisan backup:run --only-files`: Creates a timestamped ZIP containing only application files.

3. Viewing backup list

List existing backups:

php artisan backup:list

4. Restoring a backup

Warning

Always test backup restoration procedures on a non‑critical environment before applying to production. Incorrect restoration can lead to data loss or service disruption.

- Install prerequisites (Debian/Ubuntu): `sudo apt update && sudo apt install p7zip-full -y`

- Retrieve your application key: `grep '^APP_KEY=' /var/www/html/.env`

- Extract the outer archive (enter APP_KEY when prompted): `7z x '[UNIT3D]YYYY-MM-DD-HH-MM-SS.zip'`

- Unzip inner archive, if generated: `7z x backup.zip`

Note

Full backups will contain two parts; the files backup and a database backup or dump file.

Restoring the files backup:

- Copy restored files to webroot: `sudo cp -a ~/tempBackup/var/www/html/. /var/www/html/`

- Fix file permissions:

      sudo chown -R www-data:www-data /var/www/html
      sudo find /var/www/html -type f -exec chmod 664 {} \;
      sudo find /var/www/html -type d -exec chmod 775 {} \;

Restoring the database backup:

- Retrieve your database credentials: `grep '^DB_' /var/www/html/.env`

- Restore your database: `mysql -u unit3d -p unit3d < ~/tempBackup/db-dumps/mysql-unit3d.sql`

5. Reset & Cleanup

After restoring a backup, reset the cached configuration and clean up temporary files to keep the system consistent.

- Fix permissions:

      sudo chown -R www-data:www-data /var/www/html
      sudo find /var/www/html -type f -exec chmod 664 {} \;
      sudo find /var/www/html -type d -exec chmod 775 {} \;

- Reinstall Node dependencies & rebuild (if assets need it): `sudo rm -rf node_modules && sudo bun install && sudo bun run build`

- Clear caches & restart services:

      cd /var/www/html
      sudo php artisan set:all_cache
      sudo systemctl restart php8.5-fpm
      sudo php artisan queue:restart

6. Cloud Backup Sync (Google Drive)

The local backup system described above stores snapshots on the host filesystem. An optional rclone layer complements this by syncing those snapshots to an encrypted Google Drive remote (gdrive_crypt:), providing an off-site cold-backup tier. Syncs run via an ephemeral Docker container defined in rclone_gdrive/docker-compose.yml and can be automated with a cron job. For full setup and restore instructions, see Google Drive Backup Sync.

Basic tuning

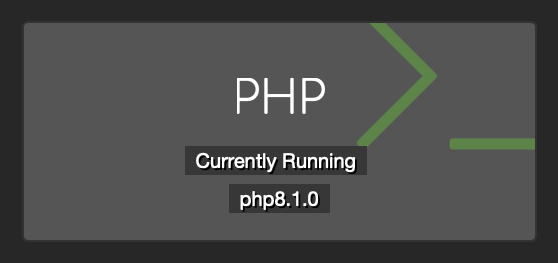

Important

These guides are intended for UNIT3D v8.0.0+ instances. While these settings are better than the defaults, be careful about following them blindly. Proper tuning requires understanding your server, running tests, and monitoring the results.

Redis single server

| Category | Severity | Time To Fix |

|---|---|---|

| :rocket: Performance | Major | 30 minutes |

Introduction

If your Redis service is running on your web server, it is highly recommended that you use Unix sockets instead of TCP ports to communicate with your web server.

Based on the Redis official benchmark, you can improve performance by up to 50% using unix sockets (versus TCP ports) on Redis.

Of course, unix sockets can only be used if both your Laravel application and Redis are running on the same server.

How to enable unix sockets

First, create the redis folder that the unix socket will be in and set appropriate permissions:

sudo mkdir -p /var/run/redis/

sudo chown -R redis:www-data /var/run/redis

sudo usermod -aG redis www-data

Next, add the unix socket path and permissions in your Redis configuration file (typically at /etc/redis/redis.conf):

unixsocket /var/run/redis/redis.sock

unixsocketperm 770

Finally, set your corresponding env variables to the socket path as above:

REDIS_HOST=/var/run/redis/redis.sock

REDIS_PORT=-1

REDIS_SCHEME=unix

Ensure that you have your config/database.php file refer to the above variables (notice the scheme addition below):

    'redis' => [
        'client' => env('REDIS_CLIENT', 'phpredis'),

        'options' => [
            'scheme' => env('REDIS_SCHEME', 'tcp'),
        ],

        'default' => [
            'url' => env('REDIS_URL'),
            'host' => env('REDIS_HOST', '127.0.0.1'),
            'password' => env('REDIS_PASSWORD', null),
            'port' => env('REDIS_PORT', '6379'),
            'database' => env('REDIS_DB', '0'),
        ],

        'cache' => [
            'url' => env('REDIS_URL'),
            'host' => env('REDIS_HOST', '127.0.0.1'),
            'password' => env('REDIS_PASSWORD', null),
            'port' => env('REDIS_PORT', '6379'),
            'database' => env('REDIS_CACHE_DB', '1'),
        ],
    ],

Once that’s all done simply restart redis.

sudo systemctl restart redis


Note

Keep in mind that once Redis listens on a unix socket, you connect with redis-cli in the terminal like so:

redis-cli -s /var/run/redis/redis.sock
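Before pointing UNIT3D at the socket, it can help to confirm that the socket file exists and that the current user may use it. The function below is a minimal sketch; its name, `check_redis_socket`, is hypothetical, and the default path is simply the one used in this guide.

```shell
# Sketch: confirm the Redis unix socket exists and that the current user can
# use it. Connecting to a unix socket needs write permission on the socket file.
check_redis_socket() {
    local sock="${1:-/var/run/redis/redis.sock}"
    if [ ! -S "$sock" ]; then
        echo "FAIL ${sock} is not a unix socket (is Redis running with unixsocket set?)"
        return 1
    fi
    if [ ! -w "$sock" ]; then
        echo "FAIL ${sock} exists but is not writable by $(id -un)"
        return 1
    fi
    echo "OK   ${sock} is a socket and writable by $(id -un)"
}
```

Run it as the web server user for a meaningful result. Note that group membership added with `usermod -aG` only applies to processes started afterwards, so PHP-FPM may need a restart before it can reach the socket.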

MySQL single server

| Category | Severity | Time To Fix |

|---|---|---|

| :rocket: Performance | Major | 10 minutes |

Introduction

If your MySQL database is running on your web server, it is highly recommended that you use Unix sockets instead of TCP ports to communicate with your web server.

Based on Percona’s benchmark, you can improve performance by up to 50% using unix sockets (versus TCP ports on MySQL).

Of course, unix sockets can only be used if both your UNIT3D application and database are running on the same server, which is the default setup.

How to enable unix sockets

First, open your MySQL configuration file.

sudo nano /etc/mysql/my.cnf

Then, uncomment and change (if needed) the socket parameter in the [mysqld] section of that configuration file:

[mysqld]

user = mysql

pid-file = /var/run/mysqld/mysqld.pid

socket = /var/run/mysqld/mysqld.sock

port = 3306

Close this file, then ensure that the mysqld.sock file exists by running an ls command on the directory where MySQL expects to find it:

ls -a /var/run/mysqld/

If the socket file exists, you will see it in this command’s output:

Output

. .. mysqld.pid mysqld.sock mysqld.sock.lock

If the file does not exist, the reason may be that MySQL is trying to create it, but does not have adequate permissions to do so. You can ensure that the correct permissions are in place by changing the directory’s ownership to the mysql user and group:

sudo chown mysql:mysql /var/run/mysqld/

Then ensure that the mysql user has the appropriate permissions over the directory. Setting these to 755 will work in most cases:

sudo chmod -R 755 /var/run/mysqld/

Finally, set your database.connections.mysql.unix_socket configuration variable or the corresponding env variable to the socket path as above:

DB_SOCKET=/var/run/mysqld/mysqld.sock

Once that’s all done simply refresh your cache and then restart the services.

php artisan set:all_cache

sudo systemctl restart mysql && sudo systemctl restart php8.3-fpm && sudo systemctl restart nginx


Composer autoloader optimization

| Category | Severity | Time To Fix |

|---|---|---|

| :rocket: Performance | Moderate | 5 minutes |

Introduction

Due to the way PSR-0/4 autoloading rules are defined, the Composer autoloader checks the filesystem before resolving a classname conclusively.

In production, Composer allows for optimization to convert the PSR-0 and PSR-4 autoloading rules into classmap rules, making autoloading quite a bit faster. In production we also don’t need all the require-dev dependencies loaded up!

How to optimize?

It’s really simple. SSH to your server and run the following commands.

composer install --prefer-dist --no-dev

composer dump-autoload --optimize

References

- https://getcomposer.org/doc/articles/autoloader-optimization.md

PHP8 OPCache

| Category | Severity | Time To Fix |

|---|---|---|

| :rocket: Performance | Major | 10 minutes |

Introduction

OPcache provides massive performance gains. One benchmark suggests it can provide a 5.5x performance gain in a Laravel application!

What is OPcache?

Every time you execute a PHP script, the script needs to be compiled to byte code. OPCache leverages a cache for this bytecode, so the next time the same script is requested, it doesn’t have to recompile it. This can save some precious execution time, and thus make UNIT3D faster.

Sounds awesome, so how can you use it?

Easy. SSH to your server and run the following command:

sudo nano /etc/php/8.3/fpm/php.ini

This assumes you're on PHP 8.3; if not, adjust the path. Once you have the config open, search for opcache.

Now you can change some values. I will walk you through the most important ones.

opcache.enable=1

This enables OPcache for php-fpm. Make sure the line is uncommented (remove the leading ;).

opcache.enable_cli=1

This enables OPcache for php-cli. Make sure the line is uncommented (remove the leading ;).

opcache.memory_consumption=256

How many megabytes you want to assign to OPcache. The value is a plain number of megabytes, without a unit suffix. Choose anything higher than the default (128 in current PHP releases) depending on your needs. 2 GB is sufficient, but if you have more RAM then make use of it! Make sure the line is uncommented (remove the leading ;).

opcache.interned_strings_buffer=64

How many megabytes you want to assign to interned strings. Choose anything higher than the default (8 in current PHP releases). 1 GB is sufficient, but if you have more RAM then make use of it! Make sure the line is uncommented (remove the leading ;).

opcache.validate_timestamps=0

This controls whether OPcache checks script timestamps for changes. If you set this to 0 (best performance), OPcache never rechecks files, so you need to manually clear the OPcache every time your PHP code changes. So if you update UNIT3D using php artisan git:update or make changes manually, you need to run sudo systemctl restart php8.3-fpm afterwards for your changes to take effect. Make sure the line is uncommented (remove the leading ;).

opcache.save_comments=1

This preserves comments in your scripts. I recommend keeping it enabled, as some libraries depend on it, and I couldn't find any benefit from disabling it (apart from saving a few bytes of RAM). Make sure the line is uncommented (remove the leading ;).

And there you have it, folks. Experiment with these values depending on the resources of your server. Save the file, exit, and restart PHP:

sudo systemctl restart php8.3-fpm

Enjoy! 🖖

PHP 8 preloading

| Category | Severity | Time To Fix |

|---|---|---|

| :rocket: Performance | Major | 5 minutes |

Introduction

This guide builds on the PHP8 OPCache guide above. You must have OPcache enabled to use preloading.

Preloading is available in PHP >= 7.4. It compiles PHP functions and classes into OPcache when the server starts, so they are available to your application without needing to load the files on each request, which improves speed. To read more about PHP preloading, see the opcache.preload documentation.

Enabling preloading

SSH to your server and run the following command:

sudo nano /etc/php/8.3/fpm/php.ini

This assumes you're on PHP 8.3; if not, adjust the path. Once you have the config open, search for preload.

Now you can change some values.

; Specifies a PHP script that is going to be compiled and executed at server

; start-up.

; https://php.net/opcache.preload

opcache.preload=/var/www/html/preload.php

; Preloading code as root is not allowed for security reasons. This directive

; facilitates to let the preloading to be run as another user.

; https://php.net/opcache.preload_user

opcache.preload_user=ubuntu

As you can see, we are pointing to the preload file included in UNIT3D, located at /var/www/html/preload.php.

Change opcache.preload_user=ubuntu to your own server user. It must not be root.

And there you have it, folks. Save the file, exit, and restart PHP:

sudo systemctl restart php8.3-fpm

You are now preloading Laravel, which makes UNIT3D faster.

PHP8 JIT

| Category | Severity | Time To Fix |

|---|---|---|

| :rocket: Performance | Moderate | 5 minutes |

Introduction

PHP 8 adds a JIT compiler to PHP’s core which has the potential to speed up performance dramatically.

First of all, the JIT only works if OPcache is enabled. This is the default for most PHP installations, but you should make sure that opcache.enable is set to 1 in your php.ini file. Enabling the JIT itself is done by specifying opcache.jit_buffer_size in php.ini. I recommend following the OPcache guide above first and then coming back here.

How to enable JIT

SSH to your server and run the following command:

sudo nano /etc/php/8.3/fpm/php.ini

This assumes you're on PHP 8.3; if not, adjust the path. Once you have the config open, search for opcache.jit.

If you do not get any results, search for [curl]; you should see the following.

[curl]

; A default value for the CURLOPT_CAINFO option. This is required to be an

; absolute path.

;curl.cainfo =

Right above it add:

opcache.jit_buffer_size=256M

It's as simple as that. Save, exit, and restart PHP:

sudo systemctl restart php8.3-fpm

PM static

| Category | Severity | Time To Fix |

|---|---|---|

| :rocket: Performance | Major | 10 minutes |

Important

This guide is intended for high traffic sites.

Introduction

Let's start with a basic description of what these options are:

pm = dynamic – the number of child processes is set dynamically based on the following directives: pm.max_children, pm.start_servers, pm.min_spare_servers, pm.max_spare_servers.

pm = ondemand – the processes spawn on demand (when requested), as opposed to dynamic, where pm.start_servers processes are started when the service is started.

pm = static – the number of child processes is fixed by pm.max_children.

The PHP-FPM pm = static setting depends heavily on how much free memory your server has. If you are short on server memory, then pm = ondemand or pm = dynamic may be better options. On the other hand, if you have the memory available, you can avoid much of the PHP process manager (PM) overhead by setting pm = static with pm.max_children at the maximum capacity of your server. In other words, when you do the math, pm.max_children should be set to the maximum number of PHP-FPM processes that can run without creating memory availability or cache pressure issues, but not so high that it overwhelms the CPU(s) and leaves a pile of pending PHP-FPM operations.
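"Doing the math" can be sketched as a simple division: the memory left for PHP-FPM divided by the average memory of one worker. The helper below is a hypothetical illustration (the name `estimate_max_children` and its three parameters are assumptions); the figures you feed it have to be measured on your own server.

```shell
# Sketch: estimate pm.max_children from memory figures (all in MB).
#   $1 total_mb      RAM on the server
#   $2 reserved_mb   RAM kept for MySQL, Redis, the OS, and other services
#   $3 per_child_mb  average resident memory of one PHP-FPM worker
# Integer arithmetic only; the result is rounded down.
estimate_max_children() {
    local total_mb="$1" reserved_mb="$2" per_child_mb="$3"
    if [ "$per_child_mb" -le 0 ] || [ "$reserved_mb" -ge "$total_mb" ]; then
        echo "invalid input" >&2
        return 1
    fi
    echo $(( ($total_mb - $reserved_mb) / $per_child_mb ))
}
```

For example, `estimate_max_children 8192 4096 64` prints `64`: with 8 GB of RAM, 4 GB reserved for other services, and 64 MB per worker, 64 workers fit. Treat the result as an upper bound on memory grounds only; CPU capacity may call for a lower value.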

Enabling static

Let's open up the PHP-FPM pool configuration file:

sudo nano /etc/php/8.3/fpm/pool.d/www.conf

Set pm = static

Set pm.max_children = 25

Save, exit, and restart PHP-FPM:

sudo systemctl restart php8.3-fpm

Conclusion

When it comes to PHP-FPM, once you start to serve serious traffic, ondemand and dynamic process managers for PHP-FPM can limit throughput because of the inherent overhead. Know your system and set your PHP-FPM processes to match your server’s max capacity.

UNIT3D development on Arch Linux with Laravel Sail

A guide by EkoNesLeg

This guide outlines the steps to set up UNIT3D using Laravel Sail on Arch Linux. While the guide highlights the use of Arch Linux, the instructions can be adapted to other environments.

Important

This guide is intended for local development environments only and is not suitable for production deployment.

Modifying .env and secure headers for non-HTTPS instances

For local development, HTTP is commonly used instead of HTTPS. To prevent mixed content issues, adjust your .env file as follows:

- Create the `.env` config:
  - Create a `.env` file in the root directory of your UNIT3D project.
  - Copy and paste the contents from `.env.example` into the `.env` file.
  - Add or modify the following environment variables:

        DB_HOST=mysql                # Match the container name in the compose file
        DB_USERNAME=unit3d           # The username can be anything except `root`
        SESSION_SECURE_COOKIE=false  # Disables secure cookies
        REDIS_HOST=redis             # Match the container name in the compose file
        CSP_ENABLED=false            # Disables Content Security Policy
        HSTS_ENABLED=false           # Disables Strict Transport Security

Prerequisites

Ensure Docker and Docker Compose are installed, as they are required for managing the Dockerized development environment provided by Laravel Sail.

Installing Docker and Docker Compose

Refer to the Arch Linux Docker documentation and install the necessary packages:

sudo pacman -S docker docker-compose

Step 1: clone the repository

Clone the UNIT3D repository to your local environment:

- Navigate to your chosen workspace directory: `cd ~/PhpstormProjects`

- Clone the repository: `git clone git@github.com:HDInnovations/UNIT3D.git`

Step 2: Composer dependency installation

- Change to the project’s root directory: `cd ~/PhpstormProjects/UNIT3D`

- Install Composer dependencies: Run the following command to install the PHP dependencies: `composer install`

Step 3: Docker environment initialization

- Switch to branch `development`: Before starting Docker, switch to the `development` branch: `git checkout development`

- Start the Docker environment using Laravel Sail: `./vendor/bin/sail up -d`

Step 4: app key generation

Generate a new APP_KEY in the .env file for encryption:

./vendor/bin/sail artisan key:generate

Note: If you are importing a database backup, make sure to set the APP_KEY in the .env file to match the key used when the backup was created.

Step 5: database migrations and seeders

Initialize your database with sample data by running migrations and seeders:

./vendor/bin/sail artisan migrate:fresh --seed

Important

This operation resets your database and seeds it with default data. Avoid running this in a production environment.

Step 6: database preparation

Initial database loading

Prepare your database with the initial schema and data. Make sure you have a database dump file, such as prod-site-backup.sql.

MySQL data importation

Import your database dump into MySQL within the Docker environment:

./vendor/bin/sail mysql -u root -p unit3d < prod-site-backup.sql

Note: Ensure that the APP_KEY in the .env file matches the key used in your deployment environment for compatibility.

Step 7: NPM dependency management

Manage Node.js dependencies and compile assets within the Docker environment:

./vendor/bin/sail bun install

./vendor/bin/sail bun run build

If needed, refresh the Node.js environment:

rm -rf node_modules && ./vendor/bin/sail bun pm cache rm && ./vendor/bin/sail bun install && ./vendor/bin/sail bun run build

Step 8: application cache configuration

Optimize the application’s performance by setting up the cache:

./vendor/bin/sail artisan set:all_cache

Step 9: environment restart

Apply new configurations or restart the environment by toggling the Docker environment:

./vendor/bin/sail restart && ./vendor/bin/sail artisan queue:restart

Additional notes

- Permissions: Use `sudo` cautiously to avoid permission conflicts, particularly with Docker commands that require elevated access.

Appendix: Sail commands for UNIT3D

This section provides a reference for managing and interacting with UNIT3D using Laravel Sail.

Docker management

- Start environment: `./vendor/bin/sail up -d` (starts Docker containers in detached mode).

- Stop environment: `./vendor/bin/sail down` (stops and removes Docker containers).

- Restart environment: `./vendor/bin/sail restart` (applies changes by restarting the Docker environment).

Dependency management

- Install Composer dependencies: `./vendor/bin/sail composer install` (installs PHP dependencies defined in `composer.json`).

- Update Composer dependencies: `./vendor/bin/sail composer update` (updates PHP dependencies defined in `composer.json`).

Laravel Artisan

- Run migrations: `./vendor/bin/sail artisan migrate` (executes database migrations).

- Seed database: `./vendor/bin/sail artisan db:seed` (seeds the database with predefined data).

- Refresh database: `./vendor/bin/sail artisan migrate:fresh --seed` (resets and seeds the database).

- Cache configurations: `./vendor/bin/sail artisan set:all_cache` (clears and caches configurations for performance).

NPM and assets

- Install NPM dependencies: `./vendor/bin/sail bun install` (installs Node.js dependencies).

- Compile assets: `./vendor/bin/sail bun run build` (compiles CSS and JavaScript assets).

Database operations

- MySQL interaction: `./vendor/bin/sail mysql -u root -p` (opens the MySQL CLI for database interaction).

Queue management

- Restart queue workers: `./vendor/bin/sail artisan queue:restart` (restarts queue workers after changes).

Troubleshooting

- View logs: `./vendor/bin/sail logs` (displays Docker container logs).

- Run PHPUnit (PEST) tests: `./vendor/bin/sail artisan test` (runs PEST tests for the application).

UNIT3D v8.x.x on MacOS with Laravel Sail and PhpStorm

A guide by HDVinnie

This guide is designed for setting up UNIT3D, a Laravel application, leveraging Laravel Sail on MacOS.

Warning: This setup guide is intended for local development environments only and is not suitable for production deployment.

Modifying .env and secure headers for non-HTTPS instances

For local development, it’s common to use HTTP instead of HTTPS. To prevent mixed content issues, follow these steps:

- Modify the `.env` config:
  - Open your `.env` file located in the root directory of your UNIT3D project.
  - Find the `SESSION_SECURE_COOKIE` setting and change its value to `false`. This disables secure cookies, which are only sent over HTTPS: `SESSION_SECURE_COOKIE=false`

- Adjust the secure headers in `config/secure-headers.php`:
  - Navigate to the `config` directory and open the `secure-headers.php` file.
  - To disable the `Strict-Transport-Security` header, locate the `hsts` setting and change its value to `false`: `'hsts' => false,`
  - Next, locate the Content Security Policy (CSP) configuration to adjust it for HTTP. Disable the CSP to prevent it from blocking content that doesn’t meet the HTTPS security requirements: `'enable' => env('CSP_ENABLED', false),`

Prerequisites

Installing Docker Desktop

Once installed, launch Docker Desktop

Installing GitHub Desktop

Once installed, launch GitHub Desktop

Installing PHPStorm

Once installed, launch PHPStorm

Step 1: clone the repository

First, clone the UNIT3D repository to your local environment by visiting the UNIT3D repo. Then click the blue Code button and select Open with GitHub Desktop. Once GitHub Desktop is open, set your local path to clone to, for example /Users/HDVinnie/Documents/Personal/UNIT3D.

Step 2: open the project in PHPStorm

Within PHPStorm, go to File and then click Open. Select the local path you just cloned to, for example /Users/HDVinnie/Documents/Personal/UNIT3D.

The following commands are run in PHPStorm. You can do so by clicking Tools->Run Command.

Step 3: start Sail

Initialize the Docker environment using Laravel Sail:

./vendor/bin/sail up -d

Step 4: Composer dependency installation

./vendor/bin/sail composer install

Step 5: Bun dependency install and compile assets

./vendor/bin/sail bun install

./vendor/bin/sail bun run build

Step 6: database migrations and seeders

For database initialization with sample data, apply migrations and seeders:

./vendor/bin/sail artisan migrate:fresh --seed

Caution: This operation will reset your database and seed it with default data. Exercise caution in production settings.

Step 7: database preparation (if you want to use a production database backup locally)

Initial database loading

Prepare your database with the initial schema and data. Ensure you have a database dump file, e.g., prod-site-backup.sql.

MySQL data importation

To import your database dump into MySQL within the local environment, use:

./vendor/bin/sail mysql -u root -p unit3d < prod-site-backup.sql

Note: For this to work properly, you must set the APP_KEY value in your local .env file to match your production APP_KEY value.

Step 8: application cache configuration

Optimize the application’s performance by setting up the cache:

./vendor/bin/sail artisan set:all_cache

Step 9: visit local instance

Open your browser and visit localhost. Enjoy!

Additional notes

- Permissions: Exercise caution with `sudo` to avoid permission conflicts, particularly for Docker commands requiring elevated access.

Appendix: Sail commands for UNIT3D

This section outlines commands for managing and interacting with UNIT3D using Laravel Sail.

Sail management

- Start environment: `./vendor/bin/sail up -d` (starts Docker containers in detached mode).

- Stop environment: `./vendor/bin/sail down -v` (stops and removes Docker containers; the `-v` flag also removes their volumes, which deletes the local database data).

- Restart environment: `./vendor/bin/sail restart` (applies changes by restarting the Docker environment).

Dependency management

- Install Composer dependencies: `./vendor/bin/sail composer install` (installs PHP dependencies defined in `composer.json`).

- Update Composer dependencies: `./vendor/bin/sail composer update` (updates PHP dependencies defined in `composer.json`).

Laravel Artisan

- Run migrations: `./vendor/bin/sail artisan migrate` (executes database migrations).

- Seed database: `./vendor/bin/sail artisan db:seed` (seeds the database with predefined data).

- Refresh database: `./vendor/bin/sail artisan migrate:fresh --seed` (resets and seeds the database).

- Cache configurations: `./vendor/bin/sail artisan set:all_cache` (clears and caches configurations for performance).

NPM and assets

- Install Bun dependencies: `./vendor/bin/sail bun install` (installs Node.js dependencies).

- Compile assets: `./vendor/bin/sail bun run build` (compiles CSS and JavaScript assets).

Database operations

- MySQL interaction: `./vendor/bin/sail mysql -u root -p` (opens the MySQL CLI for database interaction).

Queue management

- Restart queue workers: `./vendor/bin/sail artisan queue:restart` (restarts queue workers after changes).

Troubleshooting

- View logs: `./vendor/bin/sail logs` (displays Docker container logs).

- PHPUnit (PEST) tests: `./vendor/bin/sail artisan test` (runs PEST tests for the application).

UNIT3D Configuration and Audit Manual (Instance B)

This document was produced after a thorough analysis of the UNIT3D Community Edition source code, in order to diagnose and propose solutions for “Instance B” in comparison with the reference instance, “Instance A”.

1. El Crash del “Dupe Check” (Error 500)

Análisis de la Lógica de Comprobación de Duplicados

En UNIT3D, la comprobación de duplicados ocurre en dos frentes: la interfaz web y la API.

- Interfaz Web (Livewire): Se utiliza el componente

app/Http/Livewire/SimilarTorrent.php(archivo válido de ejemplo) que realiza búsquedas dinámicas basadas en IDs de metadatos (TMDB, IGDB). - Sistema de Validación (FormRequests): El archivo principal que maneja la validación de subidas es

app/Http/Requests/StoreTorrentRequest.php(archivo ya configurado). - API (Controller): El controlador

app/Http/Controllers/API/TorrentController.php(archivo válido de ejemplo) maneja las subidas vía API (/api/torrents/upload).

Rastreo del Error 500

El Error 500 (Internal Server Error) suele ocurrir cuando una excepción no es capturada por el framework o cuando hay un fallo crítico en una dependencia. Tras analizar el código, hemos identificado los siguientes puntos críticos:

A. Validación del Infohash y el Controlador de API

En el método store de API/TorrentController.php, la validación se realiza de la siguiente manera:

// app/Http/Controllers/API/TorrentController.php:219

'info_hash' => [

'required',

Rule::unique('torrents')->whereNull('deleted_at'),

],

If the database on Instance B has integrity problems, or if the database driver throws an unexpected exception during this check (for example, because of failures in the Meilisearch search engine), the server returns an HTML Error 500 page instead of a JSON validation error.

B. Failure in the Bencode Helper

The “Dupe Check” process has to decode the .torrent file to extract the info_hash. This is done by app/Helpers/Bencode.php (already configured file).

If the theodorejb/polycast Composer package is not installed correctly, or if there is an incompatibility with PHP 8.4 in the VM environment, any call to Bencode::get_infohash() will fail with a fatal error.

C. Meilisearch as the Search Driver

If Instance B has SCOUT_DRIVER=meilisearch configured but the Meilisearch service is unreachable or has an incorrect API key, the API's filter function (frequently used by external scripts to check whether a torrent already exists before uploading it) will fail with a 500.

// app/Http/Controllers/API/TorrentController.php:631

$paginator = Torrent::search(...)

D. ERROR DETECTED: Parameter Mismatch in TorrentSearchFiltersDTO

The Instance B error log confirms a critical failure caused by an unknown parameter:

Unknown named parameter $genreIds at app/Http/Controllers/API/TorrentController.php:595

This happens because the API controller tries to instantiate the filters DTO with parameters that the version of the code on Instance B does not recognize. For comparison, the correct configuration on the reference instance (Instance A) is shown here:

1. Instantiation in the API controller (app/Http/Controllers/API/TorrentController.php):

584: $filters = new TorrentSearchFiltersDTO(

585: name: $request->filled('name') ? $request->string('name')->toString() : '',

...

595: genreIds: $request->filled('genres') ? array_map('intval', $request->genres) : [],

...

617: );

2. DTO constructor definition (app/DTO/TorrentSearchFiltersDTO.php) (valid example file):

31: public function __construct(

32: private string $name = '',

...

51: private array $genreIds = [],

...

86: ) {

Diagnosis: On Instance B, the TorrentController was probably updated without updating TorrentSearchFiltersDTO.php, or vice versa, leaving the system with an incompatible constructor signature. This breaks every call to the /api/torrents/filter endpoint and produces the Error 500 described above.
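The failure mode described here (a caller passing a named argument that an older constructor does not declare) can be reproduced in miniature. The sketch below is a Python analogy, not UNIT3D code: FiltersOld and FiltersNew are hypothetical stand-ins for the two versions of the DTO, and unknown_named_arguments is an illustrative helper that compares a call against a constructor signature before the call is made.

```python
import inspect
from typing import Optional


class FiltersOld:
    """Hypothetical stand-in for an outdated DTO that lacks genre_ids."""

    def __init__(self, name: str = ""):
        self.name = name


class FiltersNew:
    """Hypothetical stand-in for the up-to-date DTO."""

    def __init__(self, name: str = "", genre_ids: Optional[list] = None):
        self.name = name
        self.genre_ids = genre_ids or []


def unknown_named_arguments(cls: type, kwargs: dict) -> list:
    """Return the keyword names in kwargs that cls.__init__ does not declare."""
    params = inspect.signature(cls.__init__).parameters
    # A **kwargs catch-all would accept anything, so nothing is unknown then.
    if any(p.kind is inspect.Parameter.VAR_KEYWORD for p in params.values()):
        return []
    return sorted(k for k in kwargs if k not in params)


call = {"name": "example", "genre_ids": [1, 2]}
print(unknown_named_arguments(FiltersOld, call))  # names the old class rejects
print(unknown_named_arguments(FiltersNew, call))  # empty when signatures match
```

Running the same comparison against both versions of a class is a quick way to confirm that two files come from different releases before digging further into the logs.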

Variables and Database Objects Involved

- Table torrents: fields info_hash (unique) and name (unique).

- .env variable: SCOUT_DRIVER (if set to meilisearch, it is a critical point of failure).

- .env variables: MEILISEARCH_HOST and MEILISEARCH_KEY.

- Temporary storage: The system stores the file temporarily in Storage::disk('torrent-files'). If the storage/app/torrents folder is not writable, the upload fails before it reaches the duplicate check.

2. Peer Connections - “Not Found” and Zeroed Statistics

Analysis of the Announce and Scrape Endpoint

In UNIT3D, torrent clients communicate with the tracker through a structured URL:

http://tu-dominio.com/announce/{passkey}.

If a client reports a “Not Found” (404) error, the request is not even reaching the tracker's internal logic, or the framework cannot find a matching route.

Diagnosing the “Not Found” Error

Based on the UNIT3D code, these are the most likely causes:

A. Error in the Announce Route (routes/announce.php) (valid example file)

The route is defined very strictly. If the client does not send the passkey exactly as the tracker expects it (32 hexadecimal characters), or if a prefix is misconfigured in the web server (Nginx), Laravel returns a 404.

// routes/announce.php:33

Route::get('{passkey}', [App\Http\Controllers\AnnounceController::class, 'index'])->name('announce');
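To rule out a malformed URL before looking at the server, the path a client is using can be checked offline. The following is a minimal sketch that assumes only what the text states (the /announce/{passkey} shape and a passkey of 32 hexadecimal characters); passkey_from_announce_path is a hypothetical helper, not part of UNIT3D, and it expects the path without the query string.

```python
import re
from typing import Optional

# Assumption taken from the text: a passkey is exactly 32 hexadecimal characters.
PASSKEY_PATTERN = re.compile(r"[0-9a-fA-F]{32}")


def passkey_from_announce_path(path: str) -> Optional[str]:
    """Return the passkey if path looks like /announce/{passkey}, else None."""
    parts = path.strip("/").split("/")
    # Any extra prefix (for example /tracker/announce/...) changes the part count.
    if len(parts) != 2 or parts[0] != "announce":
        return None
    return parts[1] if PASSKEY_PATTERN.fullmatch(parts[1]) else None


print(passkey_from_announce_path("/announce/" + "a" * 32))
print(passkey_from_announce_path("/tracker/announce/" + "a" * 32))
```

A None result for the URL copied from a torrent client points at the URL or at a web-server prefix rather than at the tracker logic.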

B. Failure Validating the User or Passkey

Inside AnnounceController (valid example file), any failure to identify the user or the torrent throws a TrackerException (valid example file). If the system is misconfigured, the torrent client may interpret certain network error responses as a generic “Not Found”.

- Nonexistent passkey: The passkey is not in the database (Error 140).

- Unregistered torrent: The infohash sent by the client does not exist in the torrents table (Error 150).

C. ERROR DETECTED: Unstable or Unreachable Redis

The Instance B logs show three critical states that confirm the Redis failure:

- RedisException: Connection refused: Total failure when trying to connect.

- RedisException: Redis server 127.0.0.1:6379 went away: The connection is lost during execution.

- Scheduled command [auto:update_user_last_actions] failed with exit code [1]: This command fails specifically because it depends on Redis to read users' pending actions (Redis::command('LLEN', ...)).

Direct Impact:

- Announce failure: The tracker blocks connections because it cannot validate throttling.

- Stalled Scheduler: Because commands fail (exit code 1) due to Redis, the system cannot carry out basic maintenance.

- Zeroed statistics: Because neither the Scheduler nor the flush commands (AutoUpsertPeers) run, the MySQL database never receives information about active peers.

Final Infrastructure Diagnosis: The Redis service on Instance B is not reliable. Because this is a RHEL environment in a VM, check whether the Redis socket is saturated, whether a local firewall (iptables/nftables) is closing connections prematurely, or whether the redis-server service is restarting constantly for lack of RAM.
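A quick way to tell “connection refused” apart from an authentication or timeout problem is to probe the port directly. The sketch below uses only the Python standard library and sends Redis an inline PING; the host, port, and status strings are assumptions chosen for illustration, and the function names are hypothetical.

```python
import socket


def classify_redis_reply(reply: bytes) -> str:
    """Map the first bytes of a reply to PING onto a coarse status string."""
    if reply.startswith(b"+PONG"):
        return "ok"
    if reply.startswith(b"-NOAUTH"):
        return "auth-required"
    return "unexpected-reply"


def probe_redis(host: str = "127.0.0.1", port: int = 6379, timeout: float = 2.0) -> str:
    """Send an inline PING and report ok, auth-required, refused, or timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout) as conn:
            conn.sendall(b"PING\r\n")
            return classify_redis_reply(conn.recv(64))
    except ConnectionRefusedError:
        # Nothing is listening on the port, or a firewall rejects the connection.
        return "refused"
    except socket.timeout:
        return "timeout"
    except OSError as exc:
        return f"error: {exc}"
```

Calling probe_redis() from the VM itself and then from wherever PHP-FPM runs helps separate a stopped service ("refused") from a firewall that silently drops packets ("timeout") and from a password mismatch ("auth-required").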

If the tracker does not receive (or cannot process) the clients' “announce” packets, the jobs that update the statistics never run.

- Announce failure: The client cannot connect, so there is no data.

- System workers: UNIT3D depends on scheduled commands to flush data from Redis to the MySQL database.

- AutoUpsertPeers: Moves peers from the cache to the database.

- AutoUpsertHistories: Updates the upload/download history.

If these commands are not running (via Scheduler/Cron), the website shows 0 seeders and 0 leechers even though the tracker is technically working.

3. Network Topology and Routing (Proxmox vs Docker)

The Importance of the Real IP

For a private tracker, the user's real IP is critical: it is used by the ban system, for the per-torrent connection limit, and to make sure the user is not cheating. If the tracker receives the proxy's internal IP (e.g. 172.18.0.1 or 10.0.0.1), the connection and security logic will fail.

Environment Differences

- Instance A (Docker): Containers usually sit behind a Docker bridge network. UNIT3D relies on the reverse proxy (Nginx/Traefik) to pass along the original IP.

- Instance B (Proxmox/VM): Because it is a VM with a dedicated IP, it is probably receiving traffic directly or through a router/firewall that may be masking IPs if “Hairpin NAT” or header forwarding is not configured correctly.

Flow Mapping (The 15 Elements)

For the IP to reach the PHP code intact, the chain must be configured as follows:

- End user: Initiates the request.

- Firewall/Router (Proxmox host): Must forward ports 80/443 to the VM.

- Load balancer (optional): If present, it must add X-Forwarded-For.

- Reverse proxy (Nginx/NPM/Traefik): Captures the IP and passes it to the backend.

- X-Real-IP header: Nginx must have proxy_set_header X-Real-IP $remote_addr;.

- X-Forwarded-For header: Nginx must have proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;.

- PHP-FPM: Receives the headers from the web server.

- Laravel (TrustProxies): The middleware must trust the proxy in order to read these headers.

- Redis: Temporarily stores the peer's IP.

- MariaDB/MySQL: Stores the IP in the peers table.

- BlockIpAddress middleware: Checks whether the real IP is banned.

- TrustProxies.php middleware: Configured to accept proxies.

- AnnounceController: Extracts the IP packed with inet_pton.

- Laravel logs: Must show the real IP when an error occurs.

- Audit system: Records the actions linked to that IP.

Code Involved and Configuration

On the reference instance, the TrustProxies.php middleware is configured to trust all proxies (*), which is common in environments with dynamic load balancers.

Configuration in app/Http/Middleware/TrustProxies.php (already configured file):

22: class TrustProxies extends Middleware

23: {

24: /**

25: * The trusted proxies for this application.

26: *

27: * @var array<int, string>|string|null

28: */

29: protected $proxies = '*';

30:

31: /**

32: * The headers that should be used to detect proxies.

33: *

34: * @var int

35: */

36: protected $headers = RequestAlias::HEADER_X_FORWARDED_FOR | RequestAlias::HEADER_X_FORWARDED_HOST | RequestAlias::HEADER_X_FORWARDED_PORT | RequestAlias::HEADER_X_FORWARDED_PROTO | RequestAlias::HEADER_X_FORWARDED_AWS_ELB;

37: }

Nginx configuration (.docker/nginx/default.conf) (already configured file):

It is vital that the web server passes the variables correctly to the PHP process:

22: location ~ \.php$ {

23: fastcgi_pass app:9000;

24: fastcgi_param SCRIPT_FILENAME $realpath_root$fastcgi_script_name;

25: fastcgi_param REMOTE_ADDR $http_x_real_ip;

26: fastcgi_param HTTP_X_REAL_IP $http_x_real_ip;

27: fastcgi_param HTTP_X_FORWARDED_FOR $proxy_add_x_forwarded_for;

28: include fastcgi_params;

29: }

4. All the Crucial Settings (The Complete Iceberg)

This section documents the internal engines that keep the tracker alive. If these services fail or are misconfigured, the tracker will “die silently” (the website loads, but nothing updates).

A. Cache and Redis (The Heart of Performance)

Redis is not optional in UNIT3D; it is used for:

- Throttling: Limiting requests from torrent clients.

- Temporary peers: Storing client announces before flushing them to SQL.

- Configuration cache: Avoiding thousands of database queries to read the site settings.

Critical configuration in config/database.php (valid example file):

UNIT3D uses several Redis databases (numbered in the 0-5 range) to avoid collisions:

'redis' => [

'default' => ['database' => env('REDIS_DB', '0')],

'cache' => ['database' => env('REDIS_CACHE_DB', '1')],

'job' => ['database' => env('REDIS_JOB_DB', '2')],

'announce' => ['database' => env('REDIS_ANNOUNCE_DB', '5')],

],

Point of failure on Instance B: The log confirms that Redis is returning Connection refused. This is a total infrastructure failure. Without Redis, UNIT3D cannot even start the scheduled tasks (schedule:run) or process peer announces. Check whether the Redis service is running on the VM and whether the REDIS_HOST, REDIS_PORT, and REDIS_PASSWORD values in the .env are correct.

B. Workers and Queues

UNIT3D delegates heavy tasks to background processes.

- Jobs path: app/Jobs/

- Critical job: ProcessAnnounce.php. This job processes torrent client announces asynchronously.

- Configuration: In production, QUEUE_CONNECTION must be redis. If it is set to sync, the tracker will be extremely slow.

- Supervisor: Use a process manager such as Supervisor or systemd to ensure that php artisan queue:work is always running.

C. Scheduled Tasks (Cron/Scheduler)

The file app/Console/Kernel.php (valid example file) defines what runs and how often.

- AutoUpsertPeers (every 5 s): Flushes peers from Redis to MySQL. Without it, the website shows 0 peers.

- AutoUpdateUserLastActions (already configured file) (every 5 s): Updates the last time a user was seen.

- AutoNerdStat (hourly): Computes advanced statistics.

- BackupCommand (daily): Performs automatic backups.

Configuration in app/Console/Kernel.php:

70: $schedule->command(AutoUpsertPeers::class)->everyFiveSeconds()->withoutOverlapping(2);

71: $schedule->command(AutoUpsertHistories::class)->everyFiveSeconds()->withoutOverlapping(2);

D. WebSockets / Broadcasting

Used for real-time notifications and the chat (Shoutbox).

- Driver: UNIT3D usually uses Pusher (compatible with Reverb or self-hosted websockets).

- Configuration: BROADCAST_DRIVER in the .env.

E. Table of Vital Environment Variables (.env)

| Variable | Recommended Value | Description |
|---|---|---|
| APP_ENV | production | Enables optimizations and hides detailed errors. |
| APP_DEBUG | false | CRITICAL: Must be false on Instance B. |
| CACHE_DRIVER | redis | Uses Redis for the global cache. |
| QUEUE_CONNECTION | redis | Runs tasks in the background. |
| DB_CONNECTION | mysql | Main database connection. |
| SCOUT_DRIVER | meilisearch | Engine for searches and filters (Error 500 point of failure). |
| MEILISEARCH_HOST | http://meilisearch:7700 | URL of the Meilisearch service. |
| REDIS_HOST | redis | Redis host. |
| TRUST_PROXIES | * | CRITICAL: Defines which proxy IPs are trusted for reading the user's real IP. |
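The table can be turned into a quick audit of an existing .env file. The following is a minimal sketch, assuming a simple KEY=VALUE file without multi-line values; parse_env and audit_env are hypothetical helpers, and the host variables (MEILISEARCH_HOST, REDIS_HOST) are deliberately left out because the values in the table are Docker service names that will differ on a VM.

```python
# Recommended values copied from the table; host names are intentionally omitted.
RECOMMENDED = {
    "APP_ENV": "production",
    "APP_DEBUG": "false",
    "CACHE_DRIVER": "redis",
    "QUEUE_CONNECTION": "redis",
    "DB_CONNECTION": "mysql",
    "SCOUT_DRIVER": "meilisearch",
}


def parse_env(text: str) -> dict:
    """Parse KEY=VALUE lines, skipping blanks and comments (simplified parser)."""
    values = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        values[key.strip()] = value.strip().strip('"').strip("'")
    return values


def audit_env(text: str) -> list:
    """Return one finding per variable that is missing or differs."""
    env = parse_env(text)
    findings = []
    for key, expected in RECOMMENDED.items():
        actual = env.get(key)
        if actual is None:
            findings.append(f"{key} is missing (expected {expected})")
        elif actual.lower() != expected:
            findings.append(f"{key}={actual} (expected {expected})")
    return findings


sample = "APP_ENV=production\nAPP_DEBUG=true\nQUEUE_CONNECTION=sync\n"
for finding in audit_env(sample):
    print(finding)
```

Running audit_env on the contents of the .env files from both instances and comparing the findings gives a short list of configuration differences to investigate first.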

5. Network Topology and Real IP (Docker vs Public)

The Problem: When viewing user profiles or logs on a freshly installed UNIT3D instance in Docker, it is common for all connections to appear with the internal IP of the Docker network (e.g. 172.21.0.1). This happens because the Nginx web server, acting as an internal proxy, reports the Docker gateway's IP instead of the real IP of the client arriving from outside (via Traefik, an external Nginx, or the host itself). This disables security features (IP bans), duplicate detection, and network statistics.

Technical Analysis:

When a request arrives from the internet, it usually passes through this chain:

User -> External Proxy (Nginx/Traefik) -> Container Nginx -> PHP-FPM

If this is not configured correctly, each hop “masks” the original IP. The container's internal Nginx sees the External Proxy's IP, and PHP-FPM sees the Nginx container's IP.

Master Configuration on This Instance:

To solve this, changes were applied on this instance at two levels:

A. Internal Nginx (Passing Headers to PHP)

In the file nginx_default.conf (already configured file) (originally located at .docker/nginx/default.conf), the block that handles PHP was modified to force the real IP to be read:

22: location ~ \.php$ {

23: fastcgi_pass app:9000;

24: fastcgi_param SCRIPT_FILENAME $realpath_root$fastcgi_script_name;

25: fastcgi_param REMOTE_ADDR $http_x_real_ip;

26: fastcgi_param HTTP_X_REAL_IP $http_x_real_ip;

27: fastcgi_param HTTP_X_FORWARDED_FOR $proxy_add_x_forwarded_for;

28: include fastcgi_params;

29: }

- Line 25 (fastcgi_param REMOTE_ADDR $http_x_real_ip;): This is the key. It overwrites PHP's REMOTE_ADDR variable with the contents of the X-Real-IP HTTP header (which Nginx exposes as $http_x_real_ip). As a result, any Laravel function that asks for the client IP receives the real IP.

B. Laravel (Trusting the Proxy)

In the file TrustProxies.php (already configured file) (located at app/Http/Middleware/TrustProxies.php), the proxies trusted for reading these headers are defined:

29: protected $proxies = '*';

- Line 29: With '*', Laravel trusts any IP that sends it proxy headers. This is necessary in Docker environments where proxy IPs can be dynamic.
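To make the idea of “trusting a proxy” concrete, the sketch below models how a list of trusted proxy ranges turns an X-Forwarded-For header into a client IP. It is a simplified illustration, not Laravel's or Symfony's actual implementation: resolve_client_ip is a hypothetical function, and the 172.16.0.0/12 range used in the example is only an assumption about where Docker proxies might live.

```python
import ipaddress


def resolve_client_ip(remote_addr: str, x_forwarded_for: str, trusted: list) -> str:
    """Walk X-Forwarded-For right to left and return the first untrusted hop.

    trusted holds CIDR ranges (strings) that identify known proxies.
    """
    networks = [ipaddress.ip_network(t) for t in trusted]

    def is_trusted(ip: str) -> bool:
        return any(ipaddress.ip_address(ip) in n for n in networks)

    # If the direct peer is not a trusted proxy, its headers are ignored.
    if not is_trusted(remote_addr):
        return remote_addr

    hops = [h.strip() for h in x_forwarded_for.split(",") if h.strip()]
    for hop in reversed(hops):
        if not is_trusted(hop):
            return hop
    # Every hop was a trusted proxy: fall back to the left-most entry, if any.
    return hops[0] if hops else remote_addr


print(resolve_client_ip("172.18.0.1", "203.0.113.7, 172.18.0.5", ["172.16.0.0/12"]))
print(resolve_client_ip("198.51.100.9", "203.0.113.7", ["172.16.0.0/12"]))
```

The second call shows why the trust list matters: a peer outside the trusted ranges cannot change its apparent IP by sending its own X-Forwarded-For header.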

Implementation Guide (Fresh Install):

If you are setting up an instance from scratch and see 172.x.x.x IPs in profiles:

- Configure the external proxy: Make sure your main Nginx (the one outside Docker) or Traefik is sending the real IP. In the external Nginx (host) this would be:

proxy_set_header X-Real-IP $remote_addr;
proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;

- Modify the internal Nginx: Edit the .docker/nginx/default.conf file in your UNIT3D repository and add the line fastcgi_param REMOTE_ADDR $http_x_real_ip; inside the location ~ \.php$ block.

- Check the Laravel middleware and .env: Open app/Http/Middleware/TrustProxies.php and make sure $proxies has the value '*' or reads from env('TRUST_PROXIES', '*'). Add TRUST_PROXIES=* to your .env file.

- Apply the changes: Restart the Nginx container:

docker compose restart web

- Final check: Go to the administration panel or your user profile and confirm that the IP shown matches your current public IP.

6. The Metadata “Iceberg” (TMDB, IMDB, Posters)

The Problem: On Instance B, newly uploaded torrents show the message “No meta found” and have no cover art or descriptions, even though the user provides the TMDB or IMDB IDs. On the reference instance (Instance A), this works automatically and instantly.

Technical Analysis of the Metadata Flow: The metadata system in UNIT3D is not a simple database query; it is a complex pipeline that involves several layers:

A. Initiation (Controllers)

When a torrent is uploaded (TorrentController@store) or a manual update is requested (SimilarTorrentController@update), the system calls the TMDBScraper service.

- Files involved: TMDBScraper.php (already configured file)

B. Background (Queues and Jobs)

TMDBScraper does not download the data directly, so as not to block the website. Instead, it puts a job on the queue: ProcessMovieJob or ProcessTvJob (already configured file).

- Files involved: ProcessMovieJob.php (already configured file)

- Critical point of failure (Redis): These jobs use a rate limiter to avoid being banned by TMDB.

// ProcessMovieJob.php:62
new RateLimited(GlobalRateLimit::TMDB),

This limiter strictly depends on Redis. Since Section 2 established that Redis on Instance B is down or unstable, any metadata job will die when it tries to check the rate limit. Without Redis fully working, there will be no metadata.

C. The API Client (External Fetch)

If the worker manages to run the job, the job uses an HTTP client to query the TheMovieDB API.

- Files involved: MovieClient.php (valid example file)

- Vital configuration: Requires a valid key in the .env.

TMDB_API_KEY=your_key_here

D. Storage and Display

Once downloaded, the data is saved in the tmdb_movies or tmdb_tv tables. Posters and backdrops are not downloaded to the local server; UNIT3D stores the relative path and generates a direct URL to the TMDB CDN (image.tmdb.org).

- Display logic: TorrentMeta.php (already configured file) (a trait used by the models to inject the metadata into the views).
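The “relative path plus CDN” arrangement can be sketched as follows. This assumes TMDB's public image URL layout (base URL, then a size segment, then the stored file path); tmdb_image_url is a hypothetical helper, and the w500 default is an illustrative choice, since the source does not say which size UNIT3D requests.

```python
TMDB_IMAGE_BASE = "https://image.tmdb.org/t/p"


def tmdb_image_url(relative_path: str, size: str = "w500") -> str:
    """Build a CDN URL from a stored relative path such as '/abc123.jpg'."""
    if not relative_path:
        # An empty path means the metadata job never stored a poster.
        raise ValueError("no image path stored for this title")
    return f"{TMDB_IMAGE_BASE}/{size}/{relative_path.lstrip('/')}"


print(tmdb_image_url("/abc123.jpg"))
print(tmdb_image_url("/abc123.jpg", size="original"))
```

Because the image is fetched by the visitor's browser from image.tmdb.org, a missing poster usually means the path was never stored, not that the server failed to download a file.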

Diagnosis and Steps to Repair Instance B:

If you see “No meta found”, review in this order:

- Redis status (Priority 1): As shown in Section 2, Redis is failing. If Redis does not work, the RateLimiter blocks the metadata jobs. Repair Redis first.

- Queue workers: Make sure the workers are processing the default queue.

php artisan queue:work --queue=default

- API key: Verify that TMDB_API_KEY in the Instance B .env is valid and has not exceeded its quota. You can test it manually:

curl "https://api.themoviedb.org/3/movie/550?api_key=TU_KEY"

- Category configuration: Check in the Staff Panel that the torrent's category has “Movie Meta” or “TV Meta” enabled. If it is disabled, the scraper will not even start.

- Network connectivity: Because this is a VM on Proxmox, make sure it has outbound internet access to reach api.themoviedb.org and image.tmdb.org.

- Database synchronization: If the tmdb_movies tables are empty after uploading torrents, the problem lies in the first two steps (Redis or the queue workers).

End of the Configuration Manual. Metadata audit completed.

Meilisearch setup for UNIT3D

Note: This guide assumes you are using a sudo user named ubuntu.

1. Install and configure Meilisearch

- Install Meilisearch:

sudo curl -L https://install.meilisearch.com | sudo sh
sudo mv ./meilisearch /usr/local/bin/
sudo chmod +x /usr/local/bin/meilisearch

- Set up directories:

sudo mkdir -p /var/lib/meilisearch/data /var/lib/meilisearch/dumps /var/lib/meilisearch/snapshots
sudo chown -R ubuntu:ubuntu /var/lib/meilisearch
sudo chmod -R 750 /var/lib/meilisearch

- Generate and record a master key:

Generate a 16-byte master key:

openssl rand -hex 16

Record this key, as it will be used in the configuration file.

- Configure Meilisearch:

sudo curl https://raw.githubusercontent.com/meilisearch/meilisearch/latest/config.toml -o /etc/meilisearch.toml
sudo nano /etc/meilisearch.toml

Update the following in /etc/meilisearch.toml:

env = "production"
master_key = "your_16_byte_master_key"
db_path = "/var/lib/meilisearch/data"
dump_dir = "/var/lib/meilisearch/dumps"
snapshot_dir = "/var/lib/meilisearch/snapshots"

- Create and enable service:

sudo nano /etc/systemd/system/meilisearch.service

Add the following:

[Unit]
Description=Meilisearch
After=systemd-user-sessions.service

[Service]
Type=simple
WorkingDirectory=/var/lib/meilisearch
ExecStart=/usr/local/bin/meilisearch --config-file-path /etc/meilisearch.toml
User=ubuntu
Group=ubuntu
Restart=on-failure

[Install]
WantedBy=multi-user.target

Enable and start the service:

sudo systemctl enable meilisearch
sudo systemctl start meilisearch
sudo systemctl status meilisearch
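On a host without openssl, the master key from the “Generate and record a master key” step can be produced with the Python standard library instead. This is a minimal sketch of an equivalent, not part of the official setup; generate_master_key is a hypothetical helper name.

```python
import secrets


def generate_master_key(num_bytes: int = 16) -> str:
    """Return num_bytes of randomness as a hex string (2 hex characters per byte)."""
    return secrets.token_hex(num_bytes)


key = generate_master_key()
print(key)  # the value differs on every run
```

As with the openssl command, 16 random bytes are printed as 32 hexadecimal characters; the same string goes into both master_key in /etc/meilisearch.toml and MEILISEARCH_KEY in the .env.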

2. Configure UNIT3D for Meilisearch

- Update .env:

sudo nano /var/www/html/.env

Add the following:

SCOUT_DRIVER=meilisearch
MEILISEARCH_HOST=http://127.0.0.1:7700
MEILISEARCH_KEY=your_16_byte_master_key

- Clear configuration and restart services:

sudo php artisan set:all_cache
sudo systemctl restart php8.3-fpm
sudo php artisan queue:restart

3. Maintenance

Reload data and sync indexes:

- Sync index settings:

After UNIT3D updates, sync the index settings to ensure they are up to date:

sudo php artisan scout:sync-index-settings

- Reload data:

Whenever Meilisearch is upgraded or during the initial setup, the database must be reloaded:

sudo php artisan auto:sync_torrents_to_meilisearch --wipe && sudo php artisan auto:sync_people_to_meilisearch

See also

For further details, refer to the official Meilisearch documentation.

Telegram Integration

This page documents the full Telegram bot integration for UNIT3D trackers: deep-link account linking, queued torrent notifications, automatic kick on ban, and group invite flow.

Important

The webhook endpoint requires an HTTPS domain. Telegram does not deliver webhooks to plain HTTP URLs.

1. Prerequisites

- A Telegram bot created via @BotFather.

- A Telegram supergroup (not a regular group) with the bot added as an administrator. The bot must have Ban users and Invite users via link permissions.

- Your UNIT3D instance must be accessible over HTTPS.

- Redis must be configured as the Laravel queue backend (the notification job is queued, not synchronous).

2. Environment Variables

Add the following variables to your .env file:

TELEGRAM_BOT_TOKEN=123456789:ABCDefGHIjklMNOpqrsTuvWXyz_1234567890

TELEGRAM_BOT_USERNAME=your_bot_username

TELEGRAM_GROUP_ID=-100XXXXXXXXXX

TELEGRAM_TOPIC_NOVEDADES=6

TELEGRAM_GROUP_INVITE_LINK=https://t.me/+XXXXXXXXXXXXXX

| Variable | How to obtain |
|---|---|
| TELEGRAM_BOT_TOKEN | @BotFather → /newbot or /token |
| TELEGRAM_BOT_USERNAME | The bot’s @username without the @ |
| TELEGRAM_GROUP_ID | Add @raw_data_bot to your group, send any message; the chat ID is shown (starts with -100) |
| TELEGRAM_TOPIC_NOVEDADES | In a forum-enabled supergroup, right-click the announcements topic → Copy Link → the number after the last / |
| TELEGRAM_GROUP_INVITE_LINK | Generate via API: curl "https://api.telegram.org/bot<TOKEN>/createChatInviteLink" -d "chat_id=<GROUP_ID>" |

3. Configuration

The config/services.php file maps these variables to the telegram service key:

'telegram' => [

'token' => env('TELEGRAM_BOT_TOKEN'),

'chat_id' => env('TELEGRAM_GROUP_ID'),

'topic_id' => env('TELEGRAM_TOPIC_NOVEDADES'),

'bot_username' => env('TELEGRAM_BOT_USERNAME'),

'group_invite_link' => env('TELEGRAM_GROUP_INVITE_LINK'),

],

All application code reads these values via config('services.telegram.*').

4. Database Migration

Run the migration to add the required columns to the users table:

php artisan migrate

This adds:

| Column | Type | Constraints |
|---|---|---|
| telegram_chat_id | BIGINT | nullable, unique |
| telegram_token | VARCHAR(64) | nullable, unique |

5. Webhook Setup

Register the webhook with Telegram so updates are delivered to your application:

curl -X POST "https://api.telegram.org/bot<YOUR_BOT_TOKEN>/setWebhook" \
-H "Content-Type: application/json" \
-d '{"url": "https://your-domain.tld/api/telegram/webhook"}'

Verify the webhook is active:

curl "https://api.telegram.org/bot<YOUR_BOT_TOKEN>/getWebhookInfo"

The response should show "url" set to your endpoint and "pending_update_count": 0.
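The check on the getWebhookInfo response can be automated. The sketch below assumes the standard Bot API response envelope (an ok flag and a result object with url, pending_update_count, and an optional last_error_message); webhook_problems is a hypothetical helper, and the sample response is made up for illustration.

```python
import json


def webhook_problems(response_body: str, expected_url: str) -> list:
    """Inspect a getWebhookInfo JSON response and list anything that looks off."""
    payload = json.loads(response_body)
    if not payload.get("ok"):
        return ["Telegram returned ok=false"]
    result = payload.get("result", {})
    problems = []
    if result.get("url") != expected_url:
        problems.append(f"url is {result.get('url')!r}, expected {expected_url!r}")
    if result.get("pending_update_count", 0) != 0:
        problems.append(f"{result['pending_update_count']} updates are pending")
    if "last_error_message" in result:
        problems.append(f"last error: {result['last_error_message']}")
    return problems


# Made-up sample response for illustration only.
sample_response = json.dumps({
    "ok": True,
    "result": {"url": "https://your-domain.tld/api/telegram/webhook",
               "pending_update_count": 0},
})
print(webhook_problems(sample_response, "https://your-domain.tld/api/telegram/webhook"))
```

An empty list means the webhook matches the expected endpoint with nothing queued; a last error entry usually carries the HTTP status Telegram received from the application.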

Important

The webhook route at POST /api/telegram/webhook intentionally excludes the throttle:api, auth:api, and banned middleware. Telegram’s servers must be able to reach it without authentication.

6. User Flow: Linking an Account

Linking works via a deep-link handshake using a TRK- prefixed token:

- The user navigates to Notification Settings in the tracker UI.

- A unique token of the form TRK- + 32 random alphanumeric characters (36 characters total) is displayed alongside a Link with Bot button.

- Clicking the button opens a t.me/<bot_username>?start=TRK-xxxxx deep link in Telegram.

- The bot receives a /start TRK-xxxxx message.

- The webhook controller looks up the token in the database using a pessimistic lock (lockForUpdate()) inside a transaction to prevent race conditions.

- On success, telegram_chat_id is set to the user’s Telegram chat ID, and telegram_token is cleared to null (one-time use).

- The bot sends a confirmation message. If TELEGRAM_GROUP_INVITE_LINK is configured, an inline keyboard button to join the group is included.

Important

Each token is single-use. Once linked, the token is cleared. Users must reset their token to re-link.
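The token format and deep link from the linking flow can be sketched in a few lines. This is an illustration of the described format (TRK- plus 32 random alphanumeric characters), not the application's actual generator; generate_link_token and deep_link are hypothetical names.

```python
import secrets
import string

ALPHABET = string.ascii_letters + string.digits


def generate_link_token() -> str:
    """Return 'TRK-' followed by 32 random alphanumeric characters."""
    return "TRK-" + "".join(secrets.choice(ALPHABET) for _ in range(32))


def deep_link(bot_username: str, token: str) -> str:
    """Build the t.me deep link that makes Telegram send '/start <token>' to the bot."""
    return f"https://t.me/{bot_username}?start={token}"


token = generate_link_token()
print(len(token))  # 4-character prefix plus 32 random characters
print(deep_link("your_bot_username", token))
```

The 36-character result fits comfortably within the VARCHAR(64) telegram_token column described in the migration section.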

7. User Flow: Bot Commands

The bot handles three commands after the webhook is active:

| Command | Behaviour |
|---|---|
| /start | Without a token: shows a welcome message. With TRK- token: performs the account linking handshake. |
| /status | Replies with link status. If linked, shows the username and optionally an inline group invite button. |
| /help | Lists the available commands. |

8. Admin Flow: Ban → Kick

When a staff member bans a user via the BanController, the following happens automatically if the user has a linked Telegram account:

- TelegramService::kickUser() is called with the user’s telegram_chat_id.

- The service calls the Telegram API banChatMember (with revoke_messages: true).

- Immediately after a successful ban, it calls unbanChatMember (with only_if_banned: true).

- This produces a clean kick: the user is removed from the group but is not permanently banned and can rejoin via a new invite link.

- Both telegram_chat_id and telegram_token are then cleared to null on the user record.
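The ban-then-unban sequence can be written down as the two Bot API requests it consists of. The sketch below only builds the method names and parameters in order and sends nothing; kick_requests is a hypothetical helper, and it assumes the stored telegram_chat_id of a private chat can be used as the user_id, which is how private chats are identified in the Bot API.

```python
def kick_requests(group_id: int, telegram_user_id: int) -> list:
    """Return the two Bot API calls, in order, that amount to a clean kick."""
    return [
        ("banChatMember", {
            "chat_id": group_id,
            "user_id": telegram_user_id,
            "revoke_messages": True,   # also removes the user's messages
        }),
        ("unbanChatMember", {
            "chat_id": group_id,
            "user_id": telegram_user_id,
            "only_if_banned": True,    # does nothing unless the ban succeeded
        }),
    ]


for method, params in kick_requests(-1001234567890, 42):
    print(method, params)
```

The only_if_banned flag is what keeps the second call safe: if the ban did not take effect, the unban request does not touch the user's membership.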

9. Torrent Notifications

When a torrent’s moderation status transitions to APPROVED, TorrentObserver dispatches a SendTelegramNotification job:

SendTelegramNotification::dispatch($torrent, $torrent->user);

The job has the following retry configuration:

| Property | Value |
|---|---|
| $tries | 3 |
| $backoff | [10, 60, 300] seconds |
| $timeout | 30 seconds |

The notification is sent as a sendPhoto API call to the configured group and topic, with an inline keyboard containing Download, IMDb, TMDb, and Trailer buttons. Poster URL is resolved from the linked movie or TV record. Trailer URL supports both a raw YouTube ID and [youtube]ID[/youtube] tags in the torrent description.

The queue worker processes these jobs. The worker runs in the worker container.
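The two accepted trailer formats can be illustrated with a small parser. This is a sketch under stated assumptions, not the job's actual code: it assumes YouTube IDs are 11 characters drawn from letters, digits, underscore, and hyphen, and trailer_url is a hypothetical function name.

```python
import re
from typing import Optional

# Assumption: YouTube video IDs are 11 characters from [A-Za-z0-9_-].
YOUTUBE_ID = r"[A-Za-z0-9_-]{11}"
TAGGED = re.compile(rf"\[youtube\]({YOUTUBE_ID})\[/youtube\]", re.IGNORECASE)
RAW = re.compile(YOUTUBE_ID)


def trailer_url(value: str) -> Optional[str]:
    """Accept a raw ID or text containing [youtube]ID[/youtube]; return a watch URL."""
    value = value.strip()
    tagged = TAGGED.search(value)
    if tagged:
        video_id = tagged.group(1)
    elif RAW.fullmatch(value):
        video_id = value
    else:
        return None
    return f"https://www.youtube.com/watch?v={video_id}"


print(trailer_url("dQw4w9WgXcQ"))
print(trailer_url("Synopsis text [youtube]dQw4w9WgXcQ[/youtube] more text"))
print(trailer_url("no trailer"))
```

Returning None when nothing matches lets the caller simply omit the Trailer button instead of sending a broken link.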

10. Token Management

Reset via UI

Users can reset their Telegram link from the Notification Settings page. Resetting:

- Clears telegram_chat_id from the user record.

- Generates a new TRK- token.

- If the user was previously linked, sends a “disconnected” message to their Telegram account and kicks them from the group.

Bulk token provisioning

To generate tokens for all users who do not yet have one:

docker compose exec app php artisan telegram:generate-tokens

Add --force to skip the confirmation prompt:

docker compose exec app php artisan telegram:generate-tokens --force

11. Docker Notes

Important

After any code change to jobs, services, or controllers related to Telegram, restart the queue worker so it picks up the updated class definitions:

docker compose restart worker

Failure to restart the worker means the running process continues to execute the old code from memory.

12. Troubleshooting

Webhook returns 500

The webhook route excludes authentication middleware deliberately. If you receive 500 errors, check that no upstream middleware (e.g., a reverse proxy WAF rule) is rejecting the request before it reaches Laravel. Verify with:

docker compose logs worker | tail -20

Bot commands return no response

First check that the webhook route is registered:

docker compose exec app php artisan route:list | grep telegram

If the route exists, verify that the bot token and chat ID are loaded and not empty:

docker compose exec app php artisan tinker --execute="dd(config('services.telegram'));"

Notifications are silent (no Telegram message on approval)

- Confirm the queue worker is running: docker compose ps worker.

- Check for failed jobs: docker compose exec app php artisan queue:failed.

- Confirm TELEGRAM_BOT_TOKEN and TELEGRAM_GROUP_ID are set and non-empty.

- Check application logs: docker compose logs app | grep -i telegram.

Token already used / not found

Each TRK- token is single-use and is cleared on successful linking. If a user sees “token not found”, they must reset their token from the Notification Settings page to generate a new one.

Google Drive Backup Sync

This page covers the rclone-based layer that uploads local UNIT3D snapshots to Google Drive with transparent encryption. It complements the built-in Laravel backup tool described in Backups, which creates the local snapshots that this sync layer uploads to the cloud.

1. Purpose and architecture

Local snapshots produced by php artisan backup:run accumulate in backups/. The rclone sync layer picks up that directory and mirrors it to a Google Drive crypt remote (gdrive_crypt:), encrypting file names and contents transparently so raw Google Drive access never exposes backup data.

The sync runs as an ephemeral Docker container defined in rclone_gdrive/docker-compose.yml. The container starts, performs the sync, and is destroyed (--rm). No long-running rclone process is kept alive.

backups/ (local snapshots, read-only mount)
    └──► rclone_sync container (ephemeral)
            └──► gdrive_crypt: remote (Google Drive, encrypted)

2. Prerequisites

- Docker and Docker Compose available on the host (the sync runs inside a container — no host-level rclone installation required).

- A Google Drive OAuth app configured in rclone (rclone config), producing a remote named gdrive_crypt of type crypt backed by a plain gdrive remote.

- A completed rclone_gdrive/config/rclone.conf file containing the gdrive and gdrive_crypt remote definitions.

Important

rclone_gdrive/config/rclone.conf is git-ignored. You must generate it manually on each host using rclone config and place it at that path before running any sync or restore command.
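Before the first sync on a new host, it can help to confirm that the configuration file defines both remotes. This is a minimal sketch, assuming an unencrypted rclone.conf in the usual INI layout; the function names are hypothetical.

```shell
# Hypothetical helper: succeeds if FILE contains the section header "[NAME]".
conf_has_remote() {
  local file="$1" name="$2"
  grep -qxF "[$name]" "$file"
}

# Check for both remotes named in the prerequisites.
check_rclone_conf() {
  local file="${1:-rclone_gdrive/config/rclone.conf}" missing=0 name
  if [ ! -r "$file" ]; then
    echo "cannot read $file"
    return 1
  fi
  for name in gdrive gdrive_crypt; do
    if ! conf_has_remote "$file" "$name"; then
      echo "missing remote: $name"
      missing=1
    fi
  done
  return "$missing"
}
```

`check_rclone_conf` returns zero only when both section headers are present. It does not validate the OAuth token or the crypt passwords.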

3. Configuration reference

File: rclone_gdrive/docker-compose.yml

| Option | Value | Effect |
|---|---|---|
| Image | rclone/rclone:latest | Official rclone image |
| Source mount | /home/rawserver/UNIT3D_Docker/backups → /data (read-only) | Local snapshots, never modified |
| Config mount | ./config → /config/rclone | Provides rclone.conf to the container |
| Log mount | ./logs → /logs | Persists sync logs on the host |
| --drive-chunk-size | 1024M | Large chunks avoid Google Drive upload timeouts on big archives |
| --transfers | 4 | Parallel file transfers |
| --checkers | 8 | Parallel file-existence checks |
| --delete-after | (flag) | Deletes destination files only after all transfers complete successfully |
| -v | (flag) | Verbose logging written to --log-file |
| Log file | /logs/sync_execution.log | Mapped to rclone_gdrive/logs/sync_execution.log on the host |

Because the sync subcommand is used, the destination mirrors the source exactly: files removed locally are also removed from the cloud, and --delete-after defers those deletions until the transfers have finished.
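Since a sync can delete remote files, previewing a run with rclone's --dry-run flag is a cautious first step. The sketch below assembles the arguments from the table. It assumes the source is /data and the destination is the root of gdrive_crypt:, and both function names are hypothetical.

```shell
# Print the rclone arguments from the configuration table, one per line.
# Assumption: source /data, destination the root of the gdrive_crypt: remote.
sync_args() {
  printf '%s\n' \
    sync /data gdrive_crypt: \
    --drive-chunk-size 1024M \
    --transfers 4 \
    --checkers 8 \
    --delete-after \
    -v \
    --log-file /logs/sync_execution.log
}

# Same arguments plus --dry-run, so rclone only reports what it would
# copy or delete and changes nothing on the remote.
dry_run_args() {
  sync_args
  printf '%s\n' --dry-run
}
```

If the compose service passes its arguments straight to rclone (an assumption about rclone_gdrive/docker-compose.yml), a preview could be started with `docker compose run --rm rclone_sync $(dry_run_args)`.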

4. Running a sync

Use the wrapper script to run a sync:

cd /home/rawserver/UNIT3D_Docker/rclone_gdrive

bash scripts/run_sync.sh

The script:

- Changes into the project directory (rclone_gdrive/).

- Appends a timestamped start entry to logs/cron_wrapper.log.

- Runs docker compose run --rm rclone_sync (ephemeral: the container is destroyed on exit).

- Appends a success or error entry to logs/cron_wrapper.log based on the exit code.

- Exits with the same exit code as the rclone process.
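The steps above can be sketched as a small shell function. This is an illustrative reconstruction, not the actual contents of run_sync.sh: the variable names, log wording, and timestamp format are assumptions.

```shell
# Illustrative sketch of a run_sync.sh-style wrapper; the real script may differ.
PROJECT_DIR="${PROJECT_DIR:-/home/rawserver/UNIT3D_Docker/rclone_gdrive}"
LOG_FILE="${LOG_FILE:-$PROJECT_DIR/logs/cron_wrapper.log}"
SYNC_CMD="${SYNC_CMD:-docker compose run --rm rclone_sync}"

# Append one timestamped line to the wrapper log.
log_line() {
  printf '[%s] %s\n' "$(date '+%Y-%m-%d %H:%M:%S')" "$*" >> "$LOG_FILE"
}

run_sync() {
  local rc=0
  cd "$PROJECT_DIR" || return 1
  log_line "sync started"
  # Run the ephemeral container and capture its exit code.
  $SYNC_CMD || rc=$?
  if [ "$rc" -eq 0 ]; then
    log_line "sync finished successfully"
  else
    log_line "sync failed with exit code $rc"
  fi
  # Propagate the exit code so cron or a monitor can react to failures.
  return "$rc"
}
```

A script built this way would end with a call to `run_sync` followed by `exit $?`. Keeping the command in SYNC_CMD makes it possible to exercise the logging path with a stand-in command before pointing it at Docker.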

Important

The script must be run by a user that has permission to call docker compose. Ensure the user is in the docker group or use sudo.

5. Cron setup

Add an entry to the crontab of the user that has Docker access. For example, to sync daily at 07:00:

crontab -e

0 7 * * * /home/rawserver/UNIT3D_Docker/rclone_gdrive/scripts/run_sync.sh

The wrapper script writes its own timestamped log to rclone_gdrive/logs/cron_wrapper.log, so cron output redirection is optional. Detailed per-file rclone output goes to rclone_gdrive/logs/sync_execution.log.
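On hosts that are provisioned by script, the crontab entry can be added without opening an editor. The following is a minimal sketch; ensure_cron_line is a hypothetical helper that reads an existing crontab on standard input and prints it back with the requested line present exactly once.

```shell
# Hypothetical helper: copy stdin to stdout, guaranteeing that LINE
# appears exactly once (as the last line of the output).
ensure_cron_line() {
  local line="$1"
  # Drop any existing exact copy of the line and keep everything else.
  # grep exits non-zero when nothing is left to print, which is fine here.
  grep -vxF -- "$line" || true
  printf '%s\n' "$line"
}
```

One way to use it is `crontab -l 2>/dev/null | ensure_cron_line "0 7 * * * /home/rawserver/UNIT3D_Docker/rclone_gdrive/scripts/run_sync.sh" | crontab -`, which rewrites the current user's crontab and leaves other entries untouched.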

6. Restore procedure

Use the restore script to download and decrypt a specific backup from Google Drive:

cd /home/rawserver/UNIT3D_Docker/rclone_gdrive

bash scripts/restore_snapshot.sh

The script runs interactively:

- Lists all top-level directories in gdrive_crypt:/ so you can see which snapshots are available.

- Prompts for the exact folder name to restore (example: snapshot_2026-03-19_0600).

- Creates the local destination directory at /home/rawserver/UNIT3D_Docker/restauracion_emergencia/<TARGET>.

- Downloads and decrypts the snapshot using rclone copy gdrive_crypt:/<TARGET> with --drive-chunk-size 1024M.

- Reports completion and lists the restored files with sizes.
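The restore flow described above can be sketched as follows. This is not the actual restore_snapshot.sh: RCLONE stands for however rclone is invoked on the host (the real script may call it through Docker), and the function names and the name validation are assumptions added for illustration.

```shell
# Assumptions: how rclone is invoked, and the restore root taken from the steps above.
RCLONE="${RCLONE:-rclone}"
RESTORE_ROOT="${RESTORE_ROOT:-/home/rawserver/UNIT3D_Docker/restauracion_emergencia}"

# List the top-level directories (snapshots) on the encrypted remote.
list_snapshots() {
  $RCLONE lsd gdrive_crypt:/
}

# Reject empty names and names containing "/" or "..", so the
# destination always stays inside RESTORE_ROOT.
valid_target() {
  case "$1" in
    ''|*/*|*..*) return 1 ;;
    *) return 0 ;;
  esac
}

# Download and decrypt one snapshot, then list what was restored.
restore_snapshot() {
  local target="$1" dest
  if ! valid_target "$target"; then
    echo "invalid snapshot name: $target" >&2
    return 2
  fi
  dest="$RESTORE_ROOT/$target"
  mkdir -p "$dest" || return 1
  $RCLONE copy "gdrive_crypt:/$target" "$dest" --drive-chunk-size 1024M -v || return $?
  ls -lh "$dest"
}
```

An interactive script would call `list_snapshots`, read the folder name from the operator, and pass it to `restore_snapshot`. Validating the name before building the path guards against a typo that would write outside the restore directory.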

Important

The restore destination is /home/rawserver/UNIT3D_Docker/restauracion_emergencia/. Files are decrypted transparently by rclone using the gdrive_crypt remote definition in rclone.conf. After the restore, follow the "Restoring a backup" procedure in Backups to apply the snapshot to the running application.

7. Logs

| File | Contents |
|---|---|
| rclone_gdrive/logs/cron_wrapper.log | Timestamped start/success/error lines written by run_sync.sh |
| rclone_gdrive/logs/sync_execution.log | Verbose per-file rclone output written by the container (-v --log-file) |

To follow a running sync in real time:

tail -f /home/rawserver/UNIT3D_Docker/rclone_gdrive/logs/sync_execution.log

To review the cron history:

cat /home/rawserver/UNIT3D_Docker/rclone_gdrive/logs/cron_wrapper.log

Server management

Important

The following assumptions are made:

- You have one root user and one regular user with sudo privileges on the dedicated server.

- The regular user with sudo privileges is assumed to have the username ubuntu.

- The project root directory is located at /var/www/html.

- All commands are run from the project root directory.

1. Elevated shell

All SSH and SFTP operations should be conducted using the non-root user. Use sudo for any commands that require elevated privileges. Do not use the root user directly.

2. File permissions

Ensure that everything in /var/www/html is owned by www-data:www-data, except for node_modules, which should be owned by root:root.

Set up these permissions with the following commands:

sudo usermod -a -G www-data ubuntu

sudo chown -R www-data:www-data /var/www/html

sudo find /var/www/html -type f -exec chmod 664 {} \;

sudo find /var/www/html -type d -exec chmod 775 {} \;

sudo chgrp -R www-data storage bootstrap/cache

sudo chmod -R ug+rwx storage bootstrap/cache

sudo rm -rf node_modules && sudo bun install && sudo bun run build
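After running the commands above, the result can be audited with find. This is a minimal sketch with hypothetical function names; it skips node_modules (expected to be root-owned) and, for the mode check, also skips storage and bootstrap/cache, since the chmod -R ug+rwx step gives those paths different modes.

```shell
# List paths under ROOT, ignoring node_modules, that are not owned by OWNER.
list_wrong_owner() {
  local root="$1" owner="$2"
  find "$root" -path "$root/node_modules" -prune -o ! -user "$owner" -print
}

# List regular files whose mode is not 664 and directories whose mode is not 775.
list_wrong_mode() {
  local root="$1"
  find "$root" \
    \( -path "$root/node_modules" -o -path "$root/storage" -o -path "$root/bootstrap/cache" \) -prune \
    -o \( -type f ! -perm 664 -print \) \
    -o \( -type d ! -perm 775 -print \)
}
```

Running `list_wrong_owner /var/www/html www-data` and `list_wrong_mode /var/www/html` should print nothing on a correctly prepared tree. Any path that is printed is one the earlier commands missed or that was changed afterwards.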

3. Handling code changes

PHP changes

If any PHP files are modified, run the following commands to clear the cache, restart the PHP-FPM service, and restart the Laravel queues:

sudo php artisan set:all_cache && sudo systemctl restart php8.3-fpm && sudo php artisan queue:restart

Static assets (SCSS, JS)

If you make changes to SCSS or JavaScript files, rebuild the static assets using:

bun run build

4. Changing the domain

- Update the environment variables:

Modify the domain in the APP_URL and MIX_ECHO_ADDRESS variables within the .env file:

sudo nano ./.env

- Refresh the TLS certificate:

Use certbot to refresh the TLS certificate:

certbot --redirect --nginx -n --agree-tos --email=sysop@your_domain.tld -d your_domain.tld -d www.your_domain.tld --rsa-key-size 2048

- Update the WebSocket configuration:

Update all domains listed in the WebSocket configuration to reflect the new domain:

sudo nano ./laravel-echo-server.json

- Restart the chatbox server:

Reload the Supervisor configuration to apply changes:

sudo supervisorctl reload

- Compile static assets:

Rebuild the static assets:

bun run build
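The .env edit in the first step can also be done non-interactively. The following is a minimal sketch using awk; set_env_value is a hypothetical helper, and it assumes simple KEY=value lines without quoting or backslashes in the new value.

```shell
# Hypothetical helper: set KEY=VALUE in a dotenv FILE, replacing an
# existing assignment or appending one if the key is absent.
set_env_value() {
  local file="$1" key="$2" value="$3" tmp rc
  tmp=$(mktemp) || return 1
  if awk -v k="$key" -v v="$value" '
      BEGIN { FS = "=" }
      $1 == k { print k "=" v; found = 1; next }  # replace the assignment
      { print }                                   # keep other lines unchanged
      END { if (!found) print k "=" v }           # append when missing
    ' "$file" > "$tmp" && cat "$tmp" > "$file"; then
    rc=0
  else
    rc=1
  fi
  rm -f "$tmp"
  return "$rc"
}
```

For example, `set_env_value .env APP_URL https://your_domain.tld` followed by the same call for MIX_ECHO_ADDRESS covers both variables. Writing back with `cat` instead of moving the temporary file keeps the ownership and mode of the original .env.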

5. Meilisearch maintenance

Refer to the maintenance section of Meilisearch setup for UNIT3D for managing upgrades and syncing indexes.

UNIT3D open source: how to share your source code

A guide by EkoNesLeg

1. Introduction

As part of complying with the GNU Affero General Public License (AGPL), sites that modify and distribute UNIT3D are required to share their source code. This guide provides an easy process for creating a sanitized tarball of your modified source code and encourages you to create and update an “Open Source” page on your site to make this code available.

2. Setting up tarball creation

2.1 Exclude sensitive files

To create a tarball that includes only the modified source code and excludes sensitive files like configuration data, you can take advantage of the existing .gitignore file in your UNIT3D deployment. Here’s how:

- Reference .gitignore for exclusions:

If your production environment has the original .gitignore file that already lists the files and directories you don’t want to include in version control, you can use it to exclude those same items from your tarball:

ln -s /var/www/html/.gitignore /var/www/html/.tarball_exclude

- Additional exclusions (if needed):

If additional exclusions are needed, or if you’ve removed the git environment from your production environment, you should manually add the exclusions to the .tarball_exclude file:

nano /var/www/html/.tarball_exclude

Add the following to the file:

.env

node_modules

storage

vendor

public

*.gz

*.lock

UNIT3D-Announce

unit3d-announce

laravel-echo-server.json

config

.DS_Store

.idea

.vscode

nbproject

.phpunit.cache

.ftpconfig

storage/backups

storage/debugbar

storage/gitupdate

storage/*.key

laravel-echo-server.lock

.vagrant

Homestead.json

Homestead.yaml

npm-debug.log

_ide_helper.php

supervisor.ini

.phpunit.cache/

.phpstan.cache/

caddy

frankenphp

frankenphp-worker.php

data

config/caddy/autosave.json

build

bootstrap

*.sql

*.DS_Store

composer.lock

*.swp

coverage.xml

cghooks.lock

*.pyc

emojipy-*

emojipy.egg-info/

lib/js/tests.html

lib/js/tests/npm-debug.log

2.2 Create the tarball

- Create a script to generate the tarball:

nano /var/www/html/create_tarball.sh

- Add the following content to the script:

#!/bin/bash
TARBALL_NAME="UNIT3D_Source_$(date +%Y%m%d_%H%M%S).tar.gz"
TAR_EXCLUDES="--exclude-from=/var/www/html/.tarball_exclude"
tar $TAR_EXCLUDES -czf "/var/www/html/public/$TARBALL_NAME" -C /var/www html
# Create a symlink to the latest tarball
ln -sf "/var/www/html/public/$TARBALL_NAME" "/var/www/html/public/UNIT3D_Source_LATEST.tar.gz"

- Make the script executable:

chmod +x /var/www/html/create_tarball.sh

- Run the script manually whenever you update your site:

/var/www/html/create_tarball.sh
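Because the tarball is published, it is worth checking that nothing sensitive slipped through the exclusion file. This is a minimal sketch with hypothetical function names; the pattern covers only a few entries from the exclusion list (.env, laravel-echo-server.json, and key files under storage) and can be extended.

```shell
# Print archive members that look sensitive.
# Exit status 0 means at least one such member was found.
find_sensitive_entries() {
  tar -tzf "$1" | grep -E '(^|/)(\.env|laravel-echo-server\.json)$|(^|/)storage/.*\.key$'
}

# Succeed only when the archive contains none of the sensitive names.
tarball_is_clean() {
  if find_sensitive_entries "$1"; then
    echo "sensitive files found in $1" >&2
    return 1
  fi
  return 0
}
```

Running `tarball_is_clean /var/www/html/public/UNIT3D_Source_LATEST.tar.gz` after each build prints any offending paths and returns non-zero, so it can stop a publish step.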

3. Creating and updating the “Open source” page

- Create an “Open source” page:

Go to your site’s /dashboard/pages section and create a new page called “Open source.”

- Add the following Markdown content to the page: